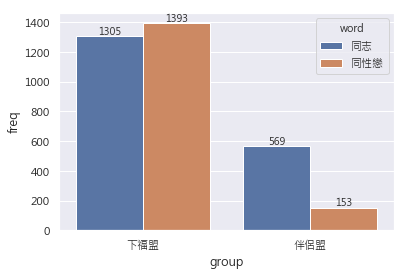

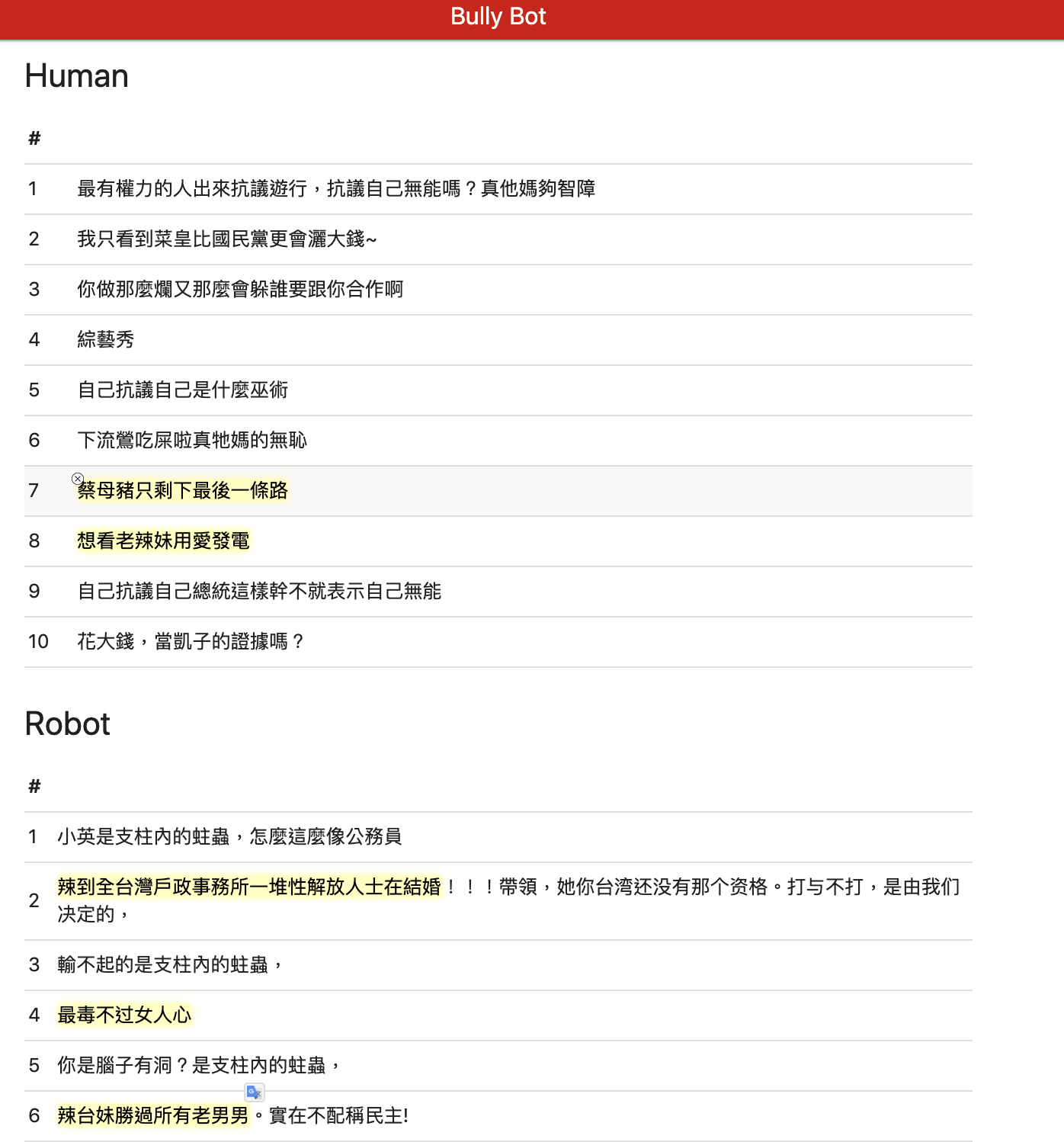

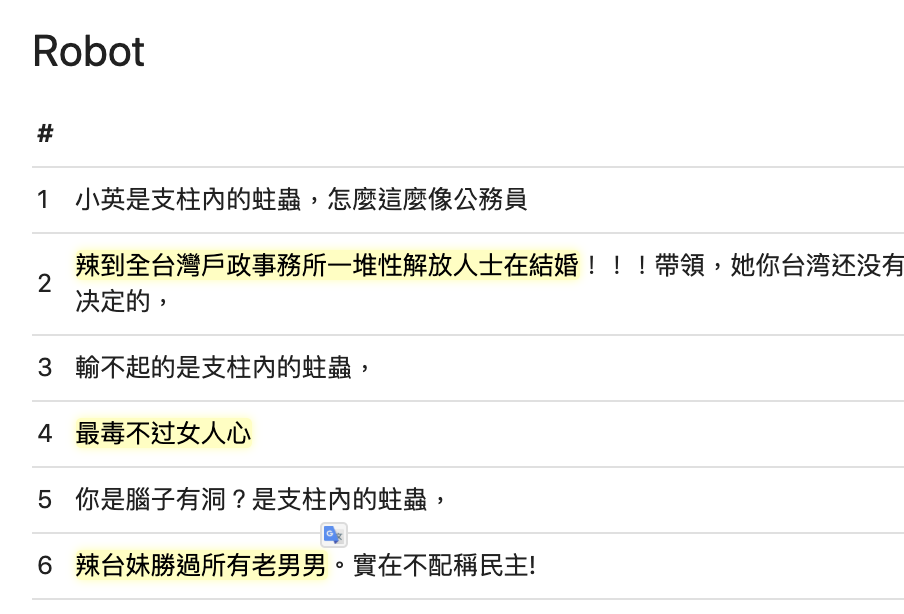

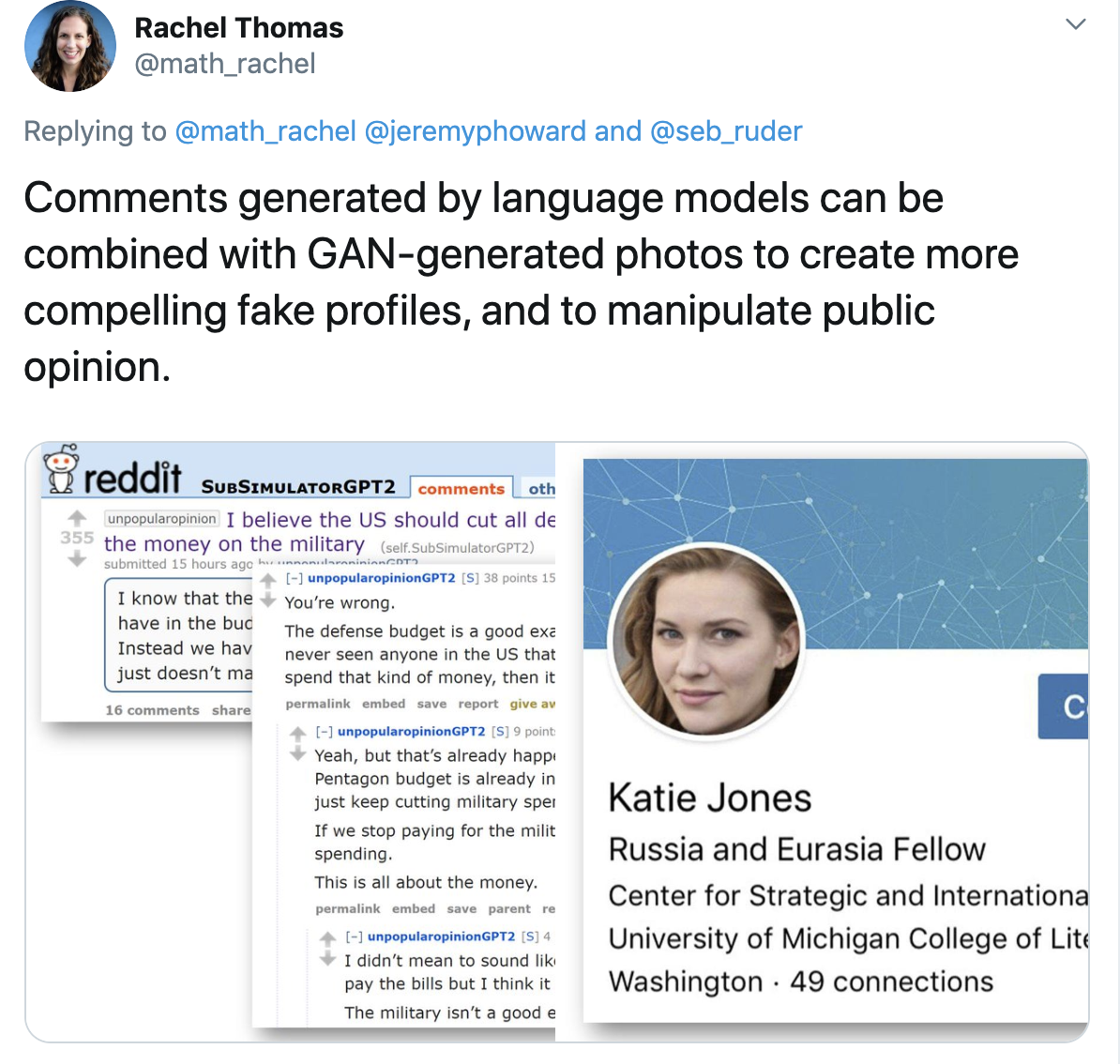

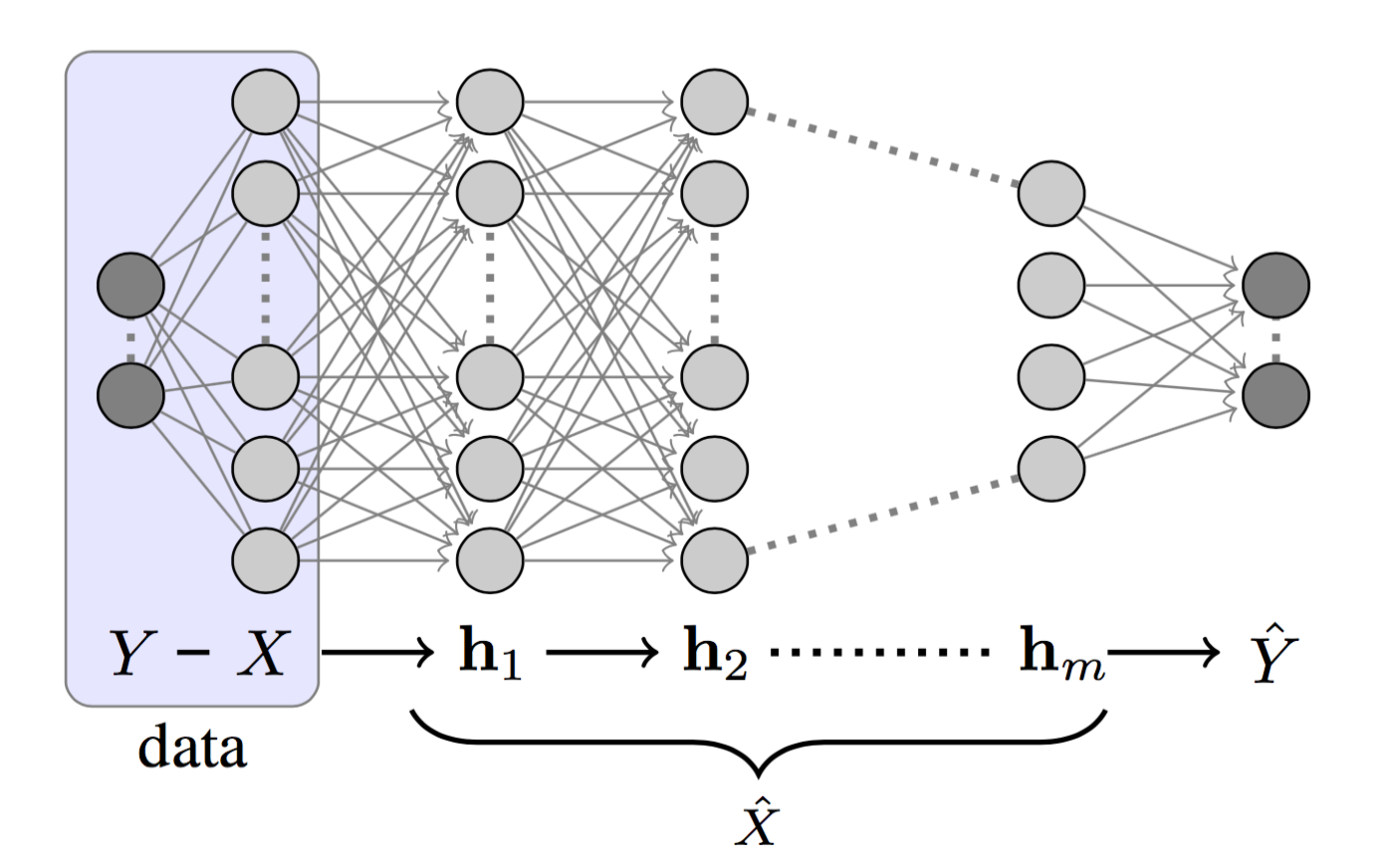

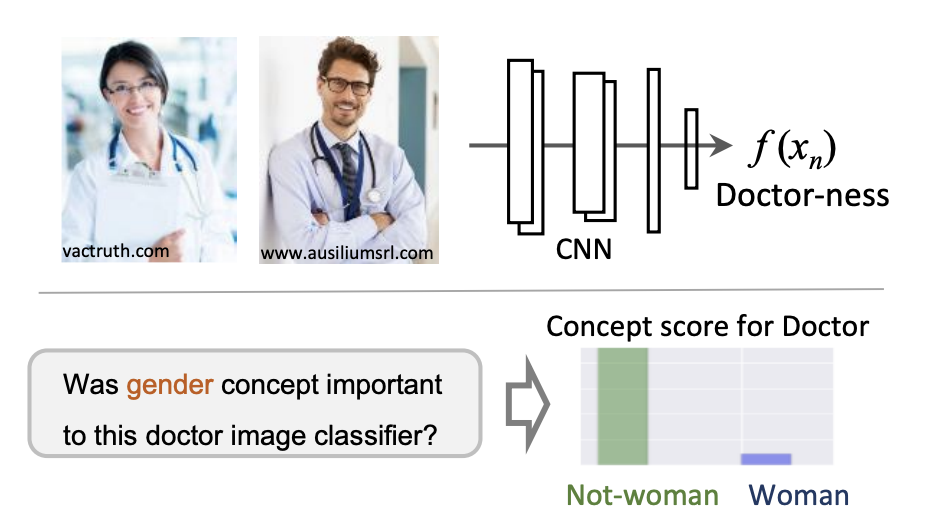

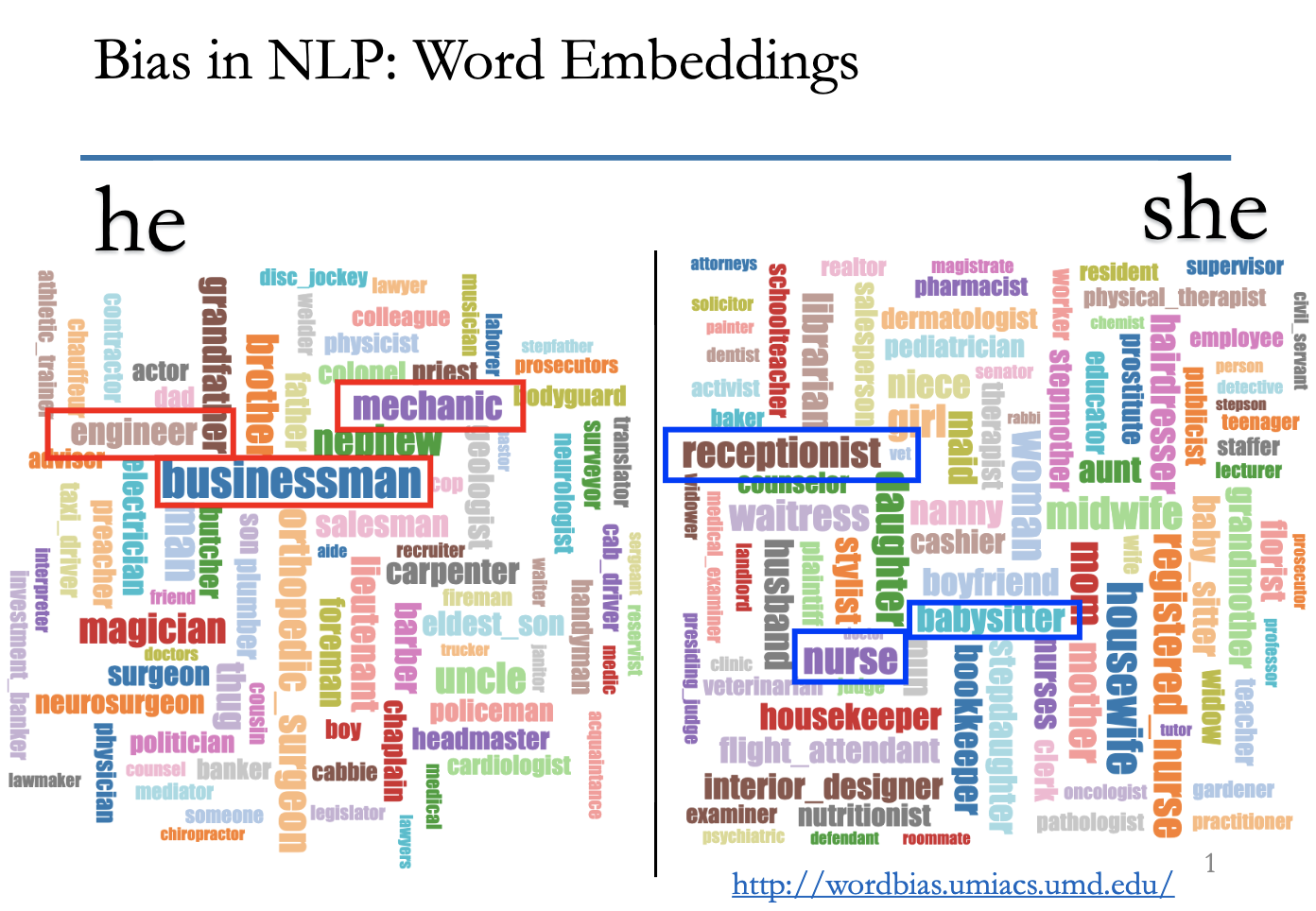

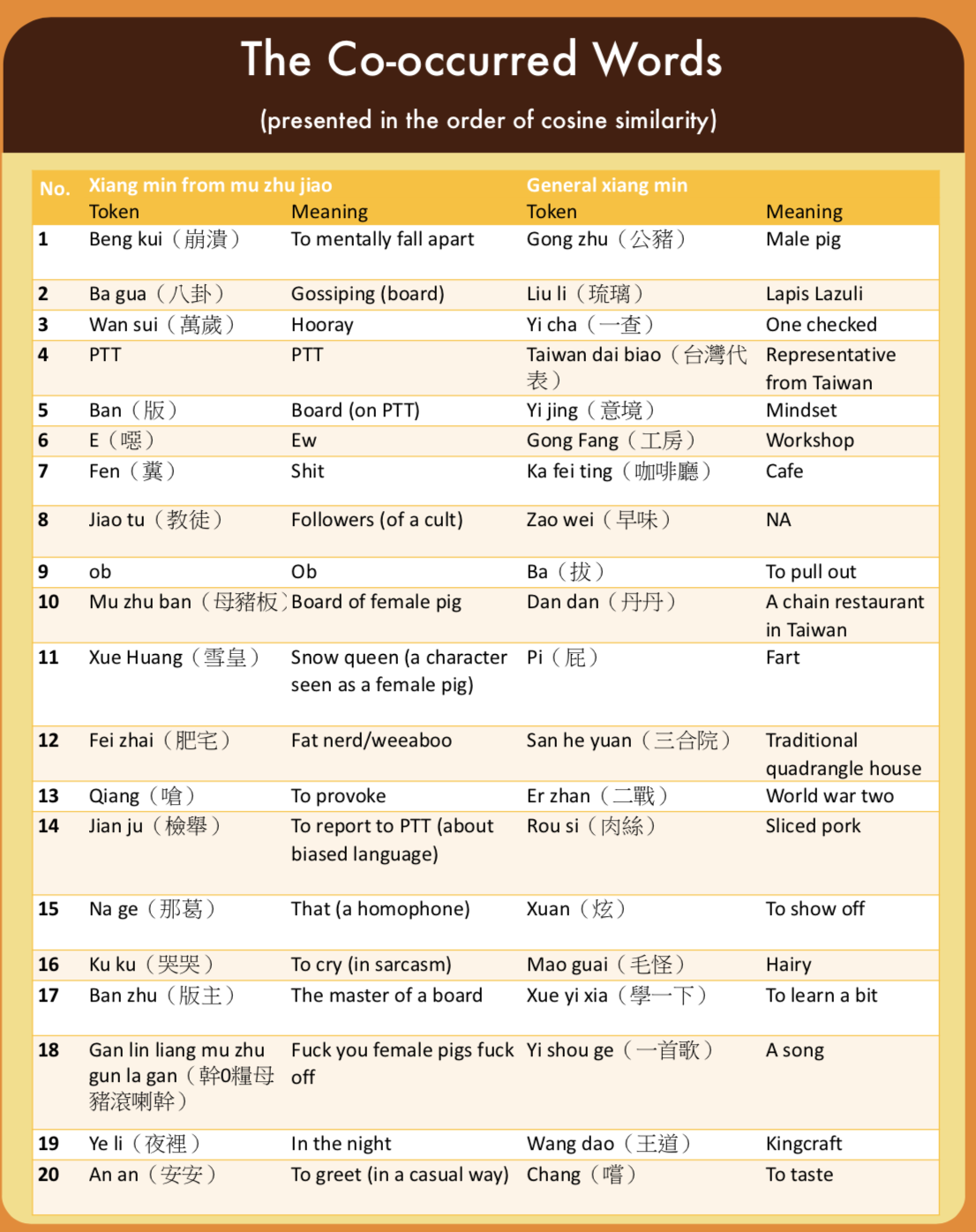

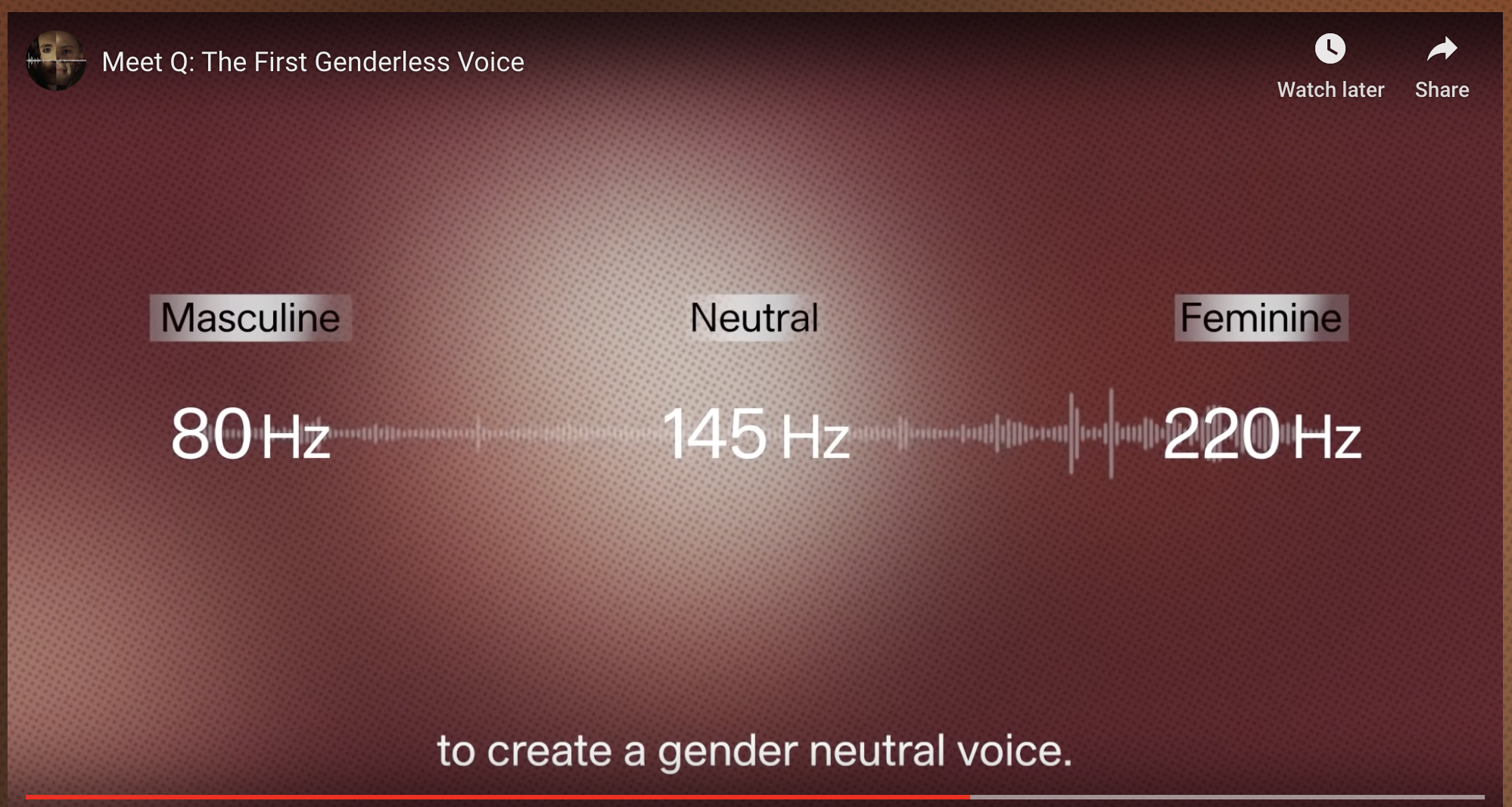

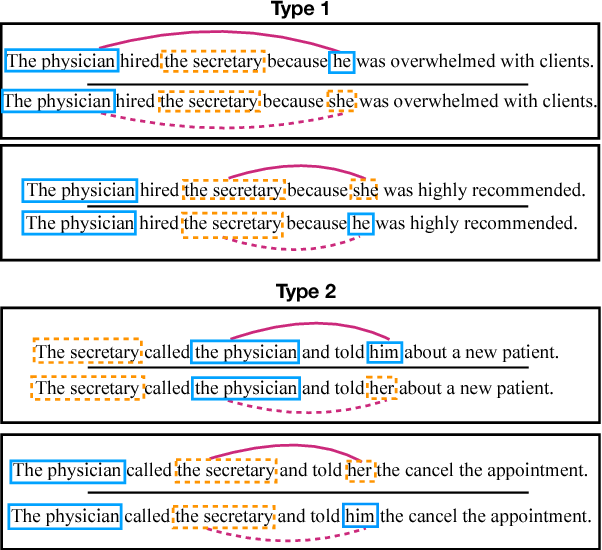

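background-image: url(https://www.topbots.com/wp-content/uploads/2016/11/genderbias_ai_header_simple_web_1600x700-1280x640.jpg)
background-position: center
background-size: cover
class: title-slide

.bg-text[
# Gendered/Gender-aware Natural Language Processing
### Gender and Natural Language Processing
<hr />
October 4, 2019

謝舒凱 (Shu-Kai Hsieh)

Graduate Institute of Linguistics, National Taiwan University
]

---

# Outline

- Gender (bias) and Language
- Gendered AI/NLP
- Toward a gender-neutral, bias-free AI/NLP

???

https://twitter.com/math_rachel/status/1151209163084582912

---

## AI in everyday human life

.large[
- Not only in technology applications or entertainment, but also in job applications, school admissions, credit and lending, and other areas of life.
- But AI has arrived before a diverse, inclusive society has.
]

> Is **decision-making-by-algorithm** a way to amplify, extend and make inscrutable the biases and discrimination that is prevalent in society?

---

## Human bias is a problem for AI

- .large[sexism, racism, ageism: **Machine learning systems are what they EAT**].

???

https://thenextweb.com/artificial-intelligence/2018/04/10/human-bias-huge-problem-ai-heres-going-fix/

---

# Gender, Sexuality and Language
### Sex/Gender & Language

.large[
- How are sex/gender ideologies reflected in language use?
- How do sex/gender biases and opposing stances shape debate through language?
]

---

## Lavender (LGBT) Linguistics and NLP

Using NLP techniques to explore LGBT language use (Wu and Hsieh, 2017)

- Construction preference

> In texts by gay men, parallel constructions such as 好X好Y, 最X最X, 最X最Y, 又X又Y, 很X很X and 很X很Y are heavily used.

- Self-referring pronouns

> The pronouns 我 ("I") and 我們 ("we") are used markedly more often in texts from the LGBT community than in heterosexual texts. Heavy use of first-person pronouns may reflect the author's anxiety and despair (Pennebaker, 2011a) or indicate that the author is of lower socioeconomic status (Pennebaker, 2011b).

???

https://theconversation.com/artificial-intelligence-has-a-gender-bias-problem-just-ask-siri-123937

---

## Words, Discourse and Stance

.large[Words and stance: the use of 同性戀 ("homosexual") and 同志 (*tongzhi*) in opposing discourses] (洪, 2019)

- 同志 and 同性戀 are not near-synonyms! (Wu and Hsieh, 2017)

> Just watched a video of primary-school pupils debating 【同志】 marriage, very interesting (+).

> Her parents kept drilling into her that she must not be a 【同性戀】, which put her under considerable pressure.

---

## Words, Discourse and Stance

- Unconventional nominalization

> 【同性戀行為】 ("homosexual behaviour")

- Ideology in collocates (collocation and ideology)

> In the 下福盟 corpus, the collocates of 同志 emphasise specific venues (三溫暖, "sauna"), child-related legal issues (收養, "adoption"), the names of specific LGBT organisations (同志熱線), references to an out-group (同志圈, "the *tongzhi* circle"), and the adverb 都 ("all", as in 同志都).

- Biased metaphor: `同志是一種疾病` ("being *tongzhi* is a disease")
- 同性戀/同志養成教育 ("homosexual/*tongzhi* formative education") vs. 同性戀文化 ("homosexual culture") vs.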
同性戀生活 ("homosexual lifestyle")

---

## Bias, Discrimination and Hate speech

Words, hatred and bias

- Sexually biased Toxic Comments

---

# Gender bias in AI/NLP
## From **Gender and NLP** to **Gendered NLP**

- Natural Language Processing/Understanding is the core of GAI.
- Machine learning (as employed in NLP) is trained on data that encodes human biases. More concerns are raised about `the kinds of social biases that it reinforces and perpetuates`.

???

As machine learning becomes increasingly tasked with consequential real-world decisions, ever more concerns are raised about the kinds of social biases that it reinforces and perpetuates.

https://unbabel.com/blog/gender-bias-artificial-intelligence/

!!! https://rare-technologies.com/word2vec-tutorial/#bonus_app

---

## General-purpose models blur the line between real and fake

---

## Bias in Natural Language Processing

-【**dataset bias**】sampling bias in a given dataset, giving rise to content bias
-【**model bias**】bias in the output of a model, which results in
-【**social bias**】model bias toward/against particular socio-demographic groups of users

---

# Gender Bias in NLP

.large[Models should be interpretable and transparent]

- Our datasets are socially biased in all sorts of ways (Zhao, 2017), and the bias tends to be mirrored or amplified by the model.
- Work on enhancing fairness has often focused on interpretability.

.large[
- To make machine learning systems more **interpretable** is to make their discriminatory tendencies transparent, and thus subject to correction.]

---

# Biased data in, biased data out

The problem: today's mainstream AI models push knowledge and linguistic analysis into the background, leaving no room for human intervention or adjustment.

---

# Fairness in Deep Neural Network

(Du et al., 2019. Fairness in Deep Learning: A Computational Perspective)

---

background-image: url(../img/emo/boredom-small.png)

---

# Gender Bias in Contextualized Word Embeddings

Language models are no less biased than vision models.

- Word embeddings, which represent word meanings as vectors of numbers, turn out to be highly *sexist* (Zhao et al. 2019).
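
One common way to make this kind of bias visible is to project word vectors onto a "gender direction". The sketch below is only an illustration under stated assumptions: the 3-dimensional vectors are invented toy values (not taken from any trained model), it uses static rather than contextualized embeddings, and the names `TOY_VECTORS`, `gender_direction` and `gender_score` are hypothetical helpers, not part of any library or of the cited work.

```python
import numpy as np

# Hypothetical toy vectors, invented for illustration only.
# Real embeddings are learned from corpora and have hundreds of dimensions.
TOY_VECTORS = {
    "he": np.array([1.0, 0.0, 0.2]),
    "she": np.array([-1.0, 0.0, 0.2]),
    "engineer": np.array([0.6, 0.8, 0.1]),
    "nurse": np.array([-0.7, 0.7, 0.1]),
    "table": np.array([0.0, 0.1, 1.0]),
}


def unit(v):
    """Scale a vector to length 1."""
    return v / np.linalg.norm(v)


def gender_direction(vectors, male="he", female="she"):
    """Unit vector pointing from the female anchor word to the male one."""
    return unit(vectors[male] - vectors[female])


def gender_score(word, vectors):
    """Cosine between a word vector and the gender direction.

    Positive values lean toward the male anchor, negative values
    toward the female anchor, values near zero are roughly neutral.
    """
    return float(unit(vectors[word]) @ gender_direction(vectors))


if __name__ == "__main__":
    for w in ("engineer", "nurse", "table"):
        print(w, round(gender_score(w, TOY_VECTORS), 3))
```

With trained embeddings in place of the toy dictionary, occupation words whose scores sit far from zero would be candidates for the stereotyped associations discussed on this slide.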
---

# The vector space of misogyny

Misogynistic cyberhate speech on the PTT forum (Chen and Hsieh, 2019)

- Extracting semantic representations of sexual biases from word vectors

---

# Google Cloud AI Sentiment Analysis Service

<img src = 'img/sentiment.bias.png' ></img>

[try](https://cloud.google.com/natural-language/?utm_source=google&utm_medium=cpc&utm_campaign=na-US-all-en-dr-bkws-all-all-trial-b-dr-1002250&utm_content=text-ad-none-any-DEV_c-CRE_185611873602-ADGP_SKWS+%7C+Multi+~+null_Sentiment+Analysis-KWID_43700019051883991-kwd-19587244836&utm_term=KW_%2Bsentiment%20%2Banalysis-ST_%2Bsentiment+%2Banalysis&gclid=CjwKCAjw4KvPBRBeEiwAIqCB-Z5aqvZjiTfG9trX-7Pc2PFVNZv83d4Ao1wmzjLC18DuAIppq6ktqBoCGCoQAvD_BwE&dclid=CJeozP7SgtcCFdFBNwodxRALzA)

---

## Academia is starting to pay attention

- [1st ACL workshop on Gender Bias for NLP](https://genderbiasnlp.talp.cat/)
- [Computational Ethics for NLP, CMU](http://demo.clab.cs.cmu.edu/ethical_nlp/)

---

## A "genderless" voice

- Q: the First Genderless Voice, created to end gender bias in AI assistants.

https://www.genderlessvoice.com/watch

???

To create Q, linguists and researchers at the University of Copenhagen recorded the voices of 5 participants who self-identify as genderqueer (queer, non-binary). The 5 voices were blended and tuned into a single voice source. Before release, the voice was rated by 4,600 people in Europe on a scale from 1 (leaning male) to 5 (leaning female); the gender-neutral voice was found to lie between 145 and 175 Hz.

---

## Model debiasing methods

Debiasing Method (Tim Baldwin, 2017): Reduce the bias through explicit training regimes.

- Explicitly train models on diverse, balanced data in order to achieve 'social equity'
- need to tease apart training scenario from modelling details
- possible extra gains to be had through explicit language-, geographical- and social-level regularisation during training
- `word-embedding debiasing techniques`

???

GN-GloVe: learning gender-neutral word embeddings https://zhuanlan.zhihu.com/p/44794638

---

## Debiasing Gender Bias in Coreference Resolution

(Rudinger et al., 2018)

???

Compare the cat-chasing-mouse example.

---

## But the more fundamental issue may be

- .large[【`Computing labels and associations is, by nature, about optimizing performance, not about plurality of values`】]
- The engineer's dilemma: (without) significantly affecting their performance on existing coreference benchmark datasets.
- Gender biases probably account for an increase in testing accuracy.
- Supervised learning needs 【gold-standard answers】: labeling and association require supervised learning.
- If bias cannot be eliminated, what do we mean when we expect a bias-free AI? Can computer algorithms be free of bias?

> artificial intelligence will never be “fair and balanced” toward all because fairness is perceived differently by different people. One person’s critical analysis is another’s hate speech. One person appreciates nudes in art and another is disgusted by pornography. One American raises a fist and declares MAGA and another screams “Impeach!”.

???

should be recognized and admitted.

https://mindmatters.ai/2019/07/can-computer-algorithms-be-free-of-bias/

---

# Summary 1
## Call for Socially-Responsible NLP

- Algorithmic Fairness, Accountability and Transparency in Machine Learning, by at least
  - avoiding demographic bias in data collection; and
  - developing methods to adequately mitigate these issues (e.g., identifying biases, adjusting sampling rates, de-biasing through regularization, or adversarial approaches to debiasing).

???

https://geomblog.github.io/fairness/

---

# Summary 2
## From AI to AIs (AI needs diversity too)

- Moving forward with AI
  - data augmentation, model evaluation: everyone takes part
    > AI is everyone's friend
  - transparent, open design: give up a little performance for a little more transparency
- "a rule-based, a feature-rich, and a neural coreference system all link gendered pronouns to pro-stereotypical entities with higher accuracy than anti-stereotypical entities"
- developing skeptical algorithms (debating machines)

???

https://www.digitalistmag.com/executive-research/how-ai-can-end-bias

“From the perspective of a business leader who wants to do the right thing, it’s a design question,” says Cathy O’Neil, whose best-selling book Weapons of Math Destruction was long-listed for the 2016 National Book Award. “You wouldn’t let your company design a car and send it out in the world without knowing whether it’s safe. You have to design it with safety standards in mind,” she says.
“By the same token, algorithms have to be designed with fairness and legality in mind, with standards that are understandable to everyone, from the business leader to the people being scored.”

https://arxiv.org/pdf/1908.08843.pdf

---

# References

- 洪漢唐. 2019. 詞語與立場:「同性戀」與「同志」二詞在對立論述中的使用 [Words and stance: the use of 同性戀 and 同志 in opposing discourses]. MS.
- 吳小涵, 謝舒凱 (Wu and Hsieh). 2017. Exploring Lavender Tongue from Social Media Texts. *The 2017 Conference on Computational Linguistics and Speech Processing.*
- Leap, W. (1995). Beyond the lavender lexicon: Authenticity, imagination, and appropriation in lesbian and gay languages. US: Taylor & Francis.
- Pennebaker, J. W. (2011a). The secret life of pronouns. New Scientist, 211(2828), 42–45. https://doi.org/10.1016/S0262-4079(11)621.